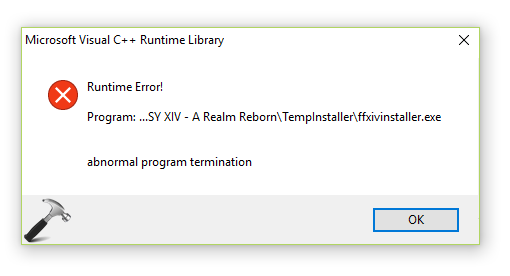

Essentially, the planning step is outsourced to an external tool, assuming the availability of domain-specific PDDL and a suitable planner which is common in certain robotic setups but not in many other domains. In this process, LLM (1) translates the problem into “Problem PDDL”, then (2) requests a classical planner to generate a PDDL plan based on an existing “Domain PDDL”, and finally (3) translates the PDDL plan back into natural language. This approach utilizes the Planning Domain Definition Language (PDDL) as an intermediate interface to describe the planning problem.

2023), involves relying on an external classical planner to do long-horizon planning. "Write a story outline." for writing a novel, or (3) with human inputs.Īnother quite distinct approach, LLM+P ( Liu et al. Task decomposition can be done (1) by LLM with simple prompting like "Steps for XYZ.\n1.", "What are the subgoals for achieving XYZ?", (2) by using task-specific instructions e.g. The search process can be BFS (breadth-first search) or DFS (depth-first search) with each state evaluated by a classifier (via a prompt) or majority vote. It first decomposes the problem into multiple thought steps and generates multiple thoughts per step, creating a tree structure. 2023) extends CoT by exploring multiple reasoning possibilities at each step. CoT transforms big tasks into multiple manageable tasks and shed lights into an interpretation of the model’s thinking process. The model is instructed to “think step by step” to utilize more test-time computation to decompose hard tasks into smaller and simpler steps. 2022) has become a standard prompting technique for enhancing model performance on complex tasks. Task Decomposition #Ĭhain of thought (CoT Wei et al. An agent needs to know what they are and plan ahead. Component One: Planning #Ī complicated task usually involves many steps. Overview of a LLM-powered autonomous agent system.

Short-term memory: I would consider all the in-context learning (See Prompt Engineering) as utilizing short-term memory of the model to learn.Reflection and refinement: The agent can do self-criticism and self-reflection over past actions, learn from mistakes and refine them for future steps, thereby improving the quality of final results.Subgoal and decomposition: The agent breaks down large tasks into smaller, manageable subgoals, enabling efficient handling of complex tasks.In a LLM-powered autonomous agent system, LLM functions as the agent’s brain, complemented by several key components:

The potentiality of LLM extends beyond generating well-written copies, stories, essays and programs it can be framed as a powerful general problem solver. Several proof-of-concepts demos, such as AutoGPT, GPT-Engineer and BabyAGI, serve as inspiring examples. Building agents with LLM (large language model) as its core controller is a cool concept.
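To make the three LLM+P steps described above concrete, here is a minimal sketch of the control flow. It is not the implementation from Liu et al.: the function name `llm_plus_p`, the prompt wording, and the two injected callables (`llm`, a text-in/text-out model call, and `planner`, a wrapper around whatever classical PDDL planner is available) are all assumptions made for illustration.

```python
from typing import Callable

# Hypothetical interfaces (assumptions, not a real library API):
#   LLM:     prompt text -> completion text
#   Planner: (domain_pddl, problem_pddl) -> PDDL plan text
LLM = Callable[[str], str]
Planner = Callable[[str, str], str]


def llm_plus_p(task: str, domain_pddl: str, llm: LLM, planner: Planner) -> str:
    """Run the three LLM+P steps and return a natural-language plan."""
    # (1) The LLM translates the natural-language task into "Problem PDDL".
    #     The prompt text here is illustrative only.
    problem_pddl = llm(
        "Translate this task into a PDDL problem file for the domain below.\n"
        f"Domain:\n{domain_pddl}\n\nTask:\n{task}"
    )

    # (2) The classical planner, not the LLM, searches for a plan using the
    #     existing "Domain PDDL" and the generated problem file.
    pddl_plan = planner(domain_pddl, problem_pddl)

    # (3) The LLM translates the PDDL plan back into natural language.
    return llm(
        "Rewrite this PDDL plan as plain-language instructions.\n"
        f"Plan:\n{pddl_plan}"
    )
```

Passing the model and planner in as plain callables keeps the sketch independent of any particular LLM client or planner binary, and makes it visible that the LLM is called twice (translation in, translation out) while the search itself happens entirely in step (2).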